The shift toward headless architecture has reshaped how developers build AI-powered applications. Instead of monolithic tools that lock you into a single interface, headless AI workflow platforms expose APIs and modular pipelines that let you orchestrate generative models, data transformations, and deployment logic from any frontend or backend. For teams shipping AI-powered creative tools in production, the platform choice directly affects iteration speed, cost, and scalability.

This guide breaks down the platforms that actually deliver on the headless promise in 2026, with a focus on real API access, self-hosting options, and integration flexibility. Whether you are building image generation pipelines, video processing chains, or multi-model orchestration layers, these are the tools worth evaluating.

What Makes a Workflow Platform "Headless"

A headless AI workflow platform separates the execution engine from the user interface. You get an API-first backend that processes requests, chains model calls, handles retries, and returns structured outputs. The frontend is yours to build however you want, whether that means a custom dashboard, a model exploration interface, or a CLI tool.
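As a rough illustration of the "handles retries and returns structured outputs" contract, here is a minimal caller-side sketch. It is not any platform's actual API: `run_workflow`, `TransientError`, the `send` transport, and the result shape are all hypothetical names chosen for this example.

```python
import time
from typing import Any, Callable, Dict

# Hypothetical transport: takes a JSON-like payload, returns a JSON-like result.
Send = Callable[[Dict[str, Any]], Dict[str, Any]]


class TransientError(Exception):
    """Raised by the transport for failures worth retrying (timeouts, 5xx)."""


def run_workflow(
    send: Send,
    payload: Dict[str, Any],
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict[str, Any]:
    """Call a headless engine through `send`, retrying transient failures.

    Waits base_delay, 2 * base_delay, 4 * base_delay, ... between attempts
    and always returns a structured dict instead of raising.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            output = send(payload)
            return {"ok": True, "attempts": attempt, "output": output}
        except TransientError as exc:
            if attempt == max_attempts:
                return {"ok": False, "attempts": attempt, "error": str(exc)}
            sleep(base_delay * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Passing the transport and `sleep` in as arguments keeps the wrapper independent of any one platform and makes it easy to exercise without a network.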

This matters because most AI applications need custom UIs. A drag-and-drop canvas is great for prototyping, but production workloads need programmatic triggers, webhook integrations, and the ability to embed AI steps into existing product flows. Platforms that pair a visual workflow builder with API access let teams prototype on the canvas and then run the same flow programmatically in production.

Key criteria for evaluation:

- REST or GraphQL API with full workflow execution support

- Self-hosting or hybrid deployment options

- Model-agnostic connectors (OpenAI, Anthropic, Stability AI, FAL, Replicate, etc.)

- Version control for workflow definitions

- Observability and logging built in

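n8n: The Self-Hosted Standard

n8n has become a common default for teams that want full control over their workflow infrastructure. It is self-hostable and supports over 400 integrations out of the box. Note that its code is source-available under a fair-code license (the Sustainable Use License) rather than an OSI-approved open-source license, which matters if you plan to resell it as a hosted service.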

For AI workflows specifically, n8n provides native nodes for LLM chains, vector stores, and tool-calling agents. You can build a complete RAG pipeline, put it behind a Webhook trigger, and expose the whole thing as a single HTTP endpoint. Calling that endpoint runs the workflow with your input data and returns a structured JSON response. Teams already using n8n for video generation automation can extend those same flows with headless API triggers.
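A minimal sketch of calling a webhook-triggered n8n workflow from Python follows. The `/webhook/<path>` form is n8n's production webhook URL pattern; the host name, the `rag-query` path, the header used for authentication, and the payload fields are placeholders for illustration, and the function names are hypothetical.

```python
import json
import urllib.request
from typing import Any, Dict, Optional


def build_n8n_webhook_request(
    base_url: str,
    webhook_path: str,
    data: Dict[str, Any],
    auth_headers: Optional[Dict[str, str]] = None,
) -> urllib.request.Request:
    """Build a POST request for an n8n production webhook URL."""
    url = f"{base_url.rstrip('/')}/webhook/{webhook_path.lstrip('/')}"
    headers = {"Content-Type": "application/json"}
    if auth_headers:
        # Assumes the Webhook node is configured for header-based auth.
        headers.update(auth_headers)
    body = json.dumps(data).encode("utf-8")
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def trigger_workflow(request: urllib.request.Request, timeout: float = 30.0) -> Any:
    """Send the request and decode the JSON the workflow responds with."""
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

Against a reachable instance, `trigger_workflow(build_n8n_webhook_request(...))` would return whatever the workflow is configured to send back, for example the output of its last node or of a Respond to Webhook node.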

- Strength: Full self-hosting, massive community, no vendor lock-in

- Weakness: UI can feel cluttered for complex multi-branch AI logic

- Best for: Teams with DevOps capacity who want complete infrastructure ownership

ComfyUI: Headless Image and Video Pipelines

ComfyUI operates differently from general-purpose workflow tools. It is purpose-built for image and video generation, offering a node-based graph system that executes as a dependency graph and re-runs only the nodes whose inputs have changed. The headless angle comes from its API mode, which lets you submit workflow JSON over HTTP, poll for completion, and then download the generated assets.
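The sketch below shows roughly how that API mode is driven from Python, assuming a stock ComfyUI server (by default at `http://127.0.0.1:8188`) and a workflow exported in API format. The `/prompt`, `/history/<prompt_id>`, and `/view` routes belong to ComfyUI's built-in server; the helper names and the sample node IDs are made up for this example.

```python
import json
import urllib.parse
import urllib.request
from typing import Any, Dict, List


def build_prompt_request(
    server: str, workflow: Dict[str, Any], client_id: str
) -> urllib.request.Request:
    """Build the POST /prompt request that queues a workflow for execution."""
    body = json.dumps({"prompt": workflow, "client_id": client_id}).encode("utf-8")
    return urllib.request.Request(
        f"{server.rstrip('/')}/prompt",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def output_image_urls(server: str, history_entry: Dict[str, Any]) -> List[str]:
    """Turn one /history/<prompt_id> entry into /view download URLs."""
    urls = []
    for node_output in history_entry.get("outputs", {}).values():
        for image in node_output.get("images", []):
            query = urllib.parse.urlencode(
                {
                    "filename": image["filename"],
                    "subfolder": image.get("subfolder", ""),
                    "type": image.get("type", "output"),
                }
            )
            urls.append(f"{server.rstrip('/')}/view?{query}")
    return urls
```

The queue response carries a `prompt_id`; a client would poll `/history/<prompt_id>` until an entry appears, then pass that entry to `output_image_urls` and download each URL.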

In 2026, several cloud providers (Replicate, FAL, RunComfy) offer hosted ComfyUI instances with API access, eliminating the GPU management burden. Wireflow AI takes a similar node-based approach but adds REST API endpoints natively, making it simpler to integrate visual pipelines into production applications without a separate hosting layer.

- Strength: Unmatched flexibility for image/video pipelines, huge model ecosystem

- Weakness: Steep learning curve, no built-in auth or rate limiting for API mode

- Best for: AI image/video teams who need granular control over generation parameters
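Because API mode ships without auth or rate limiting, teams that expose it usually put a small gateway in front. The following is one possible sketch of the decision logic such a gateway might apply, using a token bucket; `TokenBucket` and `authorize` are hypothetical names, and a real deployment would more likely rely on a reverse proxy or API gateway.

```python
import hmac
import time
from typing import Callable


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(
        self, rate: float, capacity: int, clock: Callable[[], float] = time.monotonic
    ) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


def authorize(presented_key: str, expected_key: str, bucket: TokenBucket) -> int:
    """Return the HTTP status a gateway would answer with: 401, 429, or 200."""
    # Constant-time comparison avoids leaking key contents through timing.
    if not hmac.compare_digest(presented_key.encode(), expected_key.encode()):
        return 401
    return 200 if bucket.allow() else 429
```

Only requests that receive a 200 would be forwarded to the ComfyUI server; one bucket per API key gives per-client limits.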

BuildShip: Low-Code with API-First Design

BuildShip sits at the intersection of low-code and headless. Every workflow you create automatically gets a deployed API endpoint. The platform runs on Google Cloud infrastructure and handles scaling, cold starts, and execution logging without configuration.

What sets BuildShip apart is its AI-native node library. It ships with pre-built nodes for AI content generation, vector database queries, structured output parsing, and multi-model routing. You can go from a visual prototype to a production API in a single afternoon.

- Strength: Instant API deployment, managed infrastructure, generous free tier

- Weakness: Less control over execution environment, limited self-hosting

- Best for: Small teams and solo developers who need fast API deployment without DevOps overhead

Pipedream: Event-Driven AI Orchestration

Pipedream started as an integration platform but has evolved into a capable AI workflow runtime. Its serverless execution model means you write Node.js or Python steps that run on triggers (webhooks, cron, events) and can chain any AI model call.

The headless aspect is strong here. Every Pipedream workflow is accessible via HTTP, and the platform handles queuing, retries, and dead-letter processing. For teams building AI video generation pipelines that need to handle bursty traffic patterns, Pipedream's auto-scaling is useful.
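For a sense of what a code step looks like, here is a small sketch of a Pipedream Python step behind an HTTP trigger. The `handler(pd)` entry point and `pd.steps` follow Pipedream's Python step convention; the `prompt` and `duration_s` fields and the returned shape are assumptions made up for this example.

```python
def handler(pd: "pipedream"):
    """Validate an incoming HTTP event and prepare input for a later model step."""
    event = pd.steps["trigger"]["event"]
    body = event.get("body") or {}

    prompt = str(body.get("prompt", "")).strip()
    if not prompt:
        # Returned values are exported to downstream steps.
        return {"status": "rejected", "reason": "missing prompt"}

    return {
        "status": "queued",
        "model_input": {
            "prompt": prompt,
            "duration_s": int(body.get("duration_s", 5)),
        },
    }
```

A following step could read this step's return value and pass `model_input` to whichever video model API the workflow calls.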

- Strength: Native code steps (not just visual), excellent event handling, 1000+ integrations

- Weakness: AI-specific features are newer and less mature than dedicated platforms

- Best for: Backend developers who prefer writing code but want managed infrastructure

Activepieces: Open-Source Alternative with AI Focus

Activepieces is the newer open-source contender that has gained traction specifically for AI workflows. It offers a cleaner UI than n8n, self-hosting via Docker, and a growing library of AI-focused pieces (their term for nodes).

The platform's API lets you trigger flows, manage versions, and retrieve execution logs programmatically. For teams evaluating enterprise AI tools and wanting something they can audit and modify, Activepieces provides full source access with an MIT license.

- Strength: Clean design, MIT license, active development pace

- Weakness: Smaller ecosystem than n8n, fewer enterprise features

- Best for: Teams who want open-source simplicity without n8n's complexity

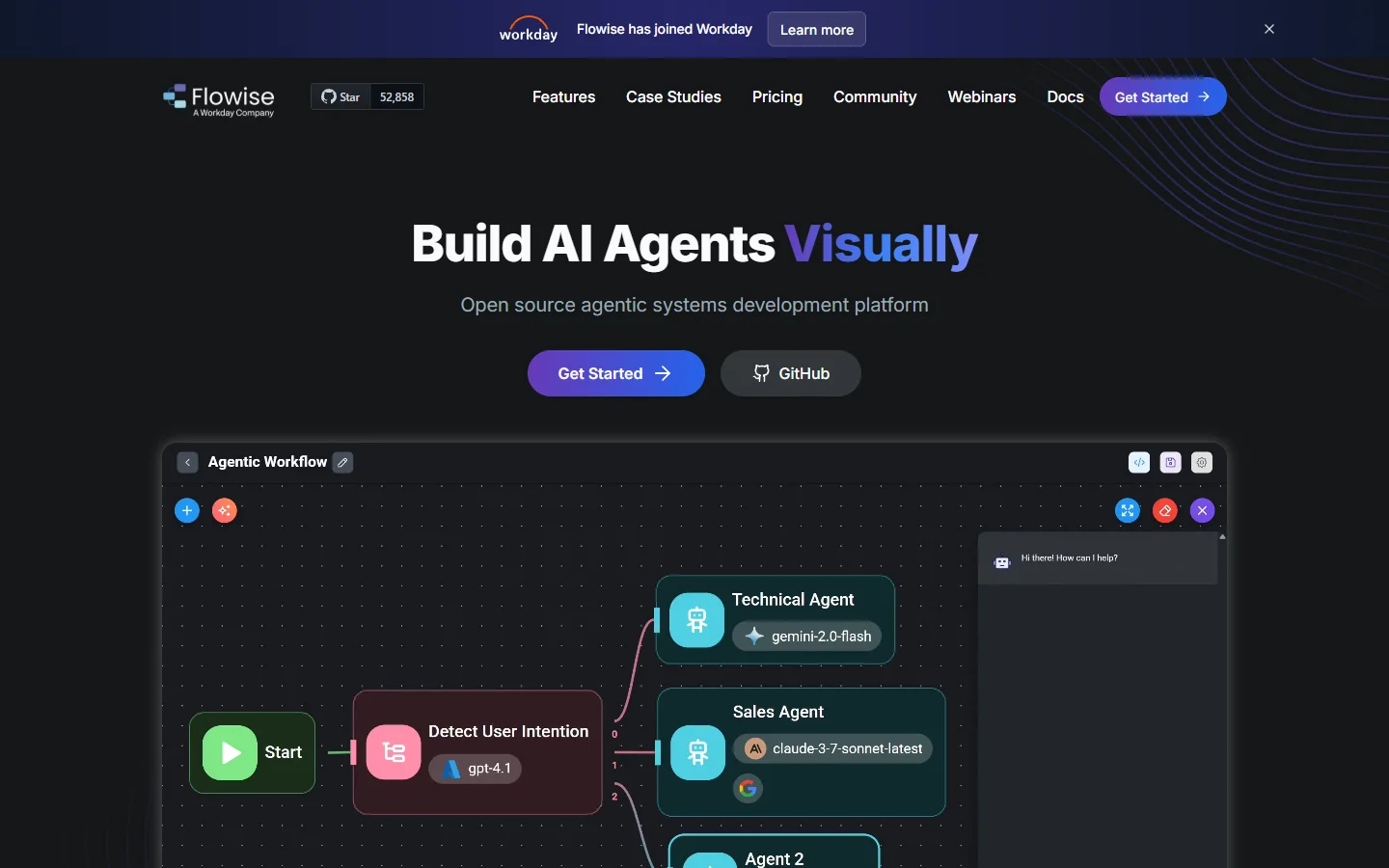

Flowise: LLM-First Headless Orchestration

Flowise focuses exclusively on LLM application workflows. It provides a visual builder for chains, agents, and RAG pipelines, with every flow automatically exposed as an API endpoint. The tool integrates with LangChain and LlamaIndex under the hood, giving you access to their full ecosystem.

For teams building conversational AI, document processing, or content generation backends, Flowise offers the fastest path from prototype to deployed API. Self-hosting is straightforward via Docker or npm.
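Calling a deployed flow might look like the sketch below. It targets Flowise's prediction endpoint (`/api/v1/prediction/<chatflow id>`) with a `question` field; the host, chatflow ID, API key, and helper names are placeholders, and the assumption that the answer arrives in a `text` field should be checked against your own flow's response.

```python
import json
import urllib.request
from typing import Any, Dict, Optional


def build_prediction_request(
    base_url: str,
    chatflow_id: str,
    question: str,
    api_key: Optional[str] = None,
    override_config: Optional[Dict[str, Any]] = None,
) -> urllib.request.Request:
    """Build a POST request for a Flowise chatflow prediction."""
    payload: Dict[str, Any] = {"question": question}
    if override_config:
        payload["overrideConfig"] = override_config
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        f"{base_url.rstrip('/')}/api/v1/prediction/{chatflow_id}",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def extract_text(response: Dict[str, Any]) -> str:
    """Pull the answer out of a decoded response (assumes a `text` field)."""
    return str(response.get("text", ""))
```

Sending the request with `urllib.request.urlopen` and decoding the JSON body yields the dict that `extract_text` expects.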

- Strength: Purpose-built for LLM apps, LangChain integration, simple self-hosting

- Weakness: Limited to text/LLM workflows, not suitable for image/video pipelines

- Best for: Teams building chatbots, RAG systems, or document processing APIs

How to Choose the Right Platform

The decision comes down to three factors: what kind of AI work you are doing, how much infrastructure you want to manage, and whether you need a visual pipeline builder for prototyping.

For image and video generation pipelines that need API access, ComfyUI (self-hosted or cloud) and dedicated AI image editing platforms give you the most control over generation parameters. For LLM orchestration, Flowise or BuildShip get you to production faster. For general-purpose automation that includes AI steps, n8n or Activepieces provide the broadest integration coverage.

Consider starting with a hosted option to validate your architecture, then migrating to self-hosted once you understand your performance and cost requirements. Most of these platforms support workflow export, so you are not locked in permanently. For a broader look at how these tools compare in video-specific use cases, see this AI video generators comparison.

Frequently Asked Questions

What is a headless AI workflow platform?

A headless AI workflow platform provides the backend execution engine for AI pipelines without a fixed frontend. You interact with it via APIs, webhooks, or SDKs, and build your own UI or integrate it into existing applications and tools.

Can I self-host these platforms?

n8n, ComfyUI, Activepieces, and Flowise all support self-hosting. BuildShip and Pipedream are primarily managed cloud services, with private deployment options for enterprise customers.

Which platform is best for image generation workflows?

ComfyUI is the most capable for complex image and video pipelines due to its node-based graph system and deep integration with Stable Diffusion, FLUX, and other models. For simpler image generation needs with API access, BuildShip or general workflow tools with AI nodes work well.

Do these platforms support multi-model routing?

Yes. n8n, BuildShip, and Pipedream all support calling multiple AI models within a single workflow. You can route requests to different models based on input type, cost thresholds, or latency requirements.
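Whatever platform runs it, the routing step usually reduces to a small filter-and-pick function. Here is one hedged sketch; the `ModelOption` fields, the model names, and the cost and latency numbers in the usage note are invented for illustration and do not describe any real model.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    modality: str          # e.g. "text", "image", "video"
    cost_per_call: float   # illustrative units, not real pricing
    p95_latency_s: float


def route(
    options: List[ModelOption],
    modality: str,
    max_cost: float,
    max_latency_s: float,
) -> Optional[ModelOption]:
    """Pick the cheapest model that fits the input type and both limits.

    Ties on cost are broken by lower latency; returns None if nothing fits.
    """
    candidates = [
        o
        for o in options
        if o.modality == modality
        and o.cost_per_call <= max_cost
        and o.p95_latency_s <= max_latency_s
    ]
    return min(
        candidates,
        key=lambda o: (o.cost_per_call, o.p95_latency_s),
        default=None,
    )
```

For example, given a hypothetical `fast-text` option (cost 0.01, latency 1.0) and `quality-text` option (cost 0.05, latency 4.0), `route(options, "text", max_cost=0.1, max_latency_s=5.0)` picks `fast-text`, while tightening the limits until nothing qualifies returns `None` so the workflow can fall back or reject the request.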

How do headless platforms handle GPU-intensive workloads?

Most platforms offload GPU work to external providers (Replicate, FAL, RunPod) via API calls rather than running models locally. ComfyUI is the exception, as it can run directly on GPU hardware. Self-hosted n8n and Flowise can also connect to local GPU resources for tasks like AI avatar generation.

What about observability and debugging?

All platforms listed here provide execution logs and step-by-step traces. n8n and Pipedream offer the most detailed observability, with per-step timing, input/output inspection, and error replay capabilities.
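When a step runs in your own code rather than inside one of these platforms, a comparable per-step trace can be approximated in a few lines. This is a generic sketch, not how any listed platform implements tracing; `Trace` and its record fields are hypothetical.

```python
import time
from contextlib import contextmanager
from typing import Any, Callable, Dict, Iterator, List


class Trace:
    """Collect per-step timing, inputs, and status for one workflow run."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self.clock = clock
        self.steps: List[Dict[str, Any]] = []

    @contextmanager
    def step(self, name: str, **inputs: Any) -> Iterator[Dict[str, Any]]:
        start = self.clock()
        record: Dict[str, Any] = {"step": name, "inputs": inputs, "status": "ok"}
        try:
            # The caller can attach outputs to the record inside the block.
            yield record
        except Exception as exc:
            record["status"] = "error"
            record["error"] = repr(exc)
            raise
        finally:
            record["seconds"] = self.clock() - start
            self.steps.append(record)
```

Wrapping each model call in `with trace.step("generate", prompt=...) as rec:` leaves a list of records in `trace.steps` that can be logged as JSON or shipped to whatever observability stack you already use.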

Are there free tiers available?

ComfyUI, Activepieces, and Flowise are free and open-source. n8n is free to self-host, though its license is fair-code (source-available) rather than open-source in the strict sense. BuildShip offers a free tier with limited executions. Pipedream provides a generous free tier with 10,000 daily invocations.